Why Vital Signs Matter for Autonomous Vehicle Safety

A research-focused analysis of why vital signs are becoming central to autonomous vehicle safety: driver monitoring, fallback readiness, and in-cabin sensing design.

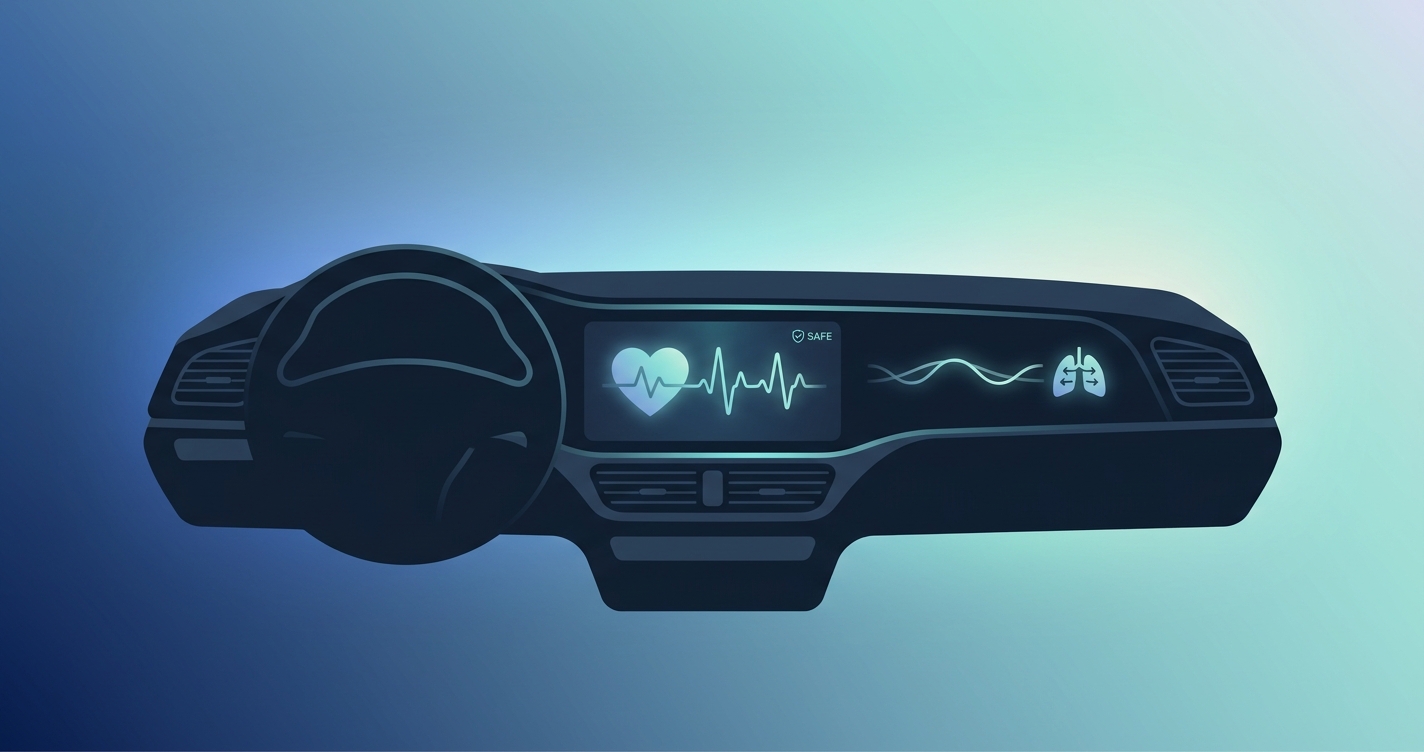

Autonomous driving programs have spent years refining perception stacks, redundancy logic, and takeover alerts. What has become harder to ignore is the human in the cabin. That is why vital signs have become a serious engineering question for autonomous vehicle safety rather than a speculative add-on. If an assisted or automated vehicle needs to know whether a driver can take back control, gaze alone is not the whole story. Heart rate, respiration, heart-rate variability, and broader signs of physiological strain offer another layer of evidence about whether the person behind the wheel is alert, overloaded, impaired, or in medical distress.

"Takeover times ranged from 1.9 to 25.7 seconds" in noncritical automated-driving transitions, according to Alexander Eriksson and Neville A. Stanton's 2017 Human Factors study at the University of Southampton. That spread is exactly why cabin systems need more context about human readiness.

Why vital signs matter for autonomous vehicle safety now

The shift is happening for two reasons at once. First, advanced driver assistance and Level 2+ systems still depend on a human fallback driver in many scenarios. Second, regulators and safety programs are pushing vehicle makers toward direct observation of the person in the seat.

NHTSA's 2024 Report to Congress on Advanced Impaired Driving Prevention Technology says alcohol-impaired driving was linked to 12,429 traffic deaths in 2023, about 30% of all traffic fatalities, with societal costs estimated at $165 billion. That report is about impairment broadly, not autonomous driving alone, but the logic carries over: safer vehicles increasingly need passive ways to detect whether the driver is fit to operate the car.

Euro NCAP's 2026 direction points the same way. The program's updated occupant and driver monitoring approach puts more weight on continuous monitoring of distraction, drowsiness, impairment, and unresponsiveness. Once that becomes part of the scoring conversation, OEMs and Tier-1 suppliers stop treating in-cabin sensing as a futuristic experiment. It becomes platform planning.

Vital signs do not replace camera-based driver monitoring. They make it more useful.

What physiological signals add beyond face and gaze alone

A camera can show that a driver is looking forward. It may not show whether that driver is stressed, drifting toward fatigue, or experiencing a cardiac problem. Physiological signals help fill that gap.

- Heart rate can reveal acute stress, workload spikes, or sudden medical instability

- Respiration rate can reflect drowsiness, panic, heavy cognitive load, or respiratory compromise

- Heart-rate variability can provide another view into fatigue and autonomic nervous system activity

- Multi-signal trends are often more useful than any single alert threshold
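Heart-rate variability is often summarized with RMSSD, the root mean square of successive differences between inter-beat (RR) intervals. The article does not say which HRV metric any particular system uses, so this is an illustrative sketch only: it assumes the sensing stack already delivers clean RR intervals in milliseconds, and the function name `rmssd` is our own.

```python
import math
from typing import Sequence


def rmssd(rr_intervals_ms: Sequence[float]) -> float:
    """Root mean square of successive differences between RR intervals, in ms.

    Assumes the intervals are already cleaned; real pipelines first reject
    ectopic beats and motion-corrupted intervals.
    """
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    # Difference between each interval and the one that follows it.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Example: four beats roughly 800 ms apart (about 75 bpm).
print(round(rmssd([800, 810, 790, 805]), 2))
```

In a cabin setting, a value like this would be tracked against the same driver's earlier readings rather than judged in isolation, because resting HRV varies widely between people.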

That matters in automated driving because fallback readiness is not binary. A person can be technically awake and still not be ready.
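To make the trend-versus-threshold point concrete, here is a minimal sketch, assuming each signal (heart rate, respiration, an HRV metric) arrives as a list of samples and that a calm-driving baseline has already been recorded for this driver. It scores how far each signal has drifted from that personal baseline and averages the results into a graded 0-to-1 value instead of a yes/no flag. `SignalWindow`, `concern_score`, and the default `z_limit` of 2.0 are hypothetical choices for illustration, not validated parameters.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import Dict, List


@dataclass
class SignalWindow:
    baseline: List[float]  # samples recorded during this driver's calm baseline
    recent: List[float]    # samples from the most recent analysis window


def deviation_z(window: SignalWindow) -> float:
    """Distance of the recent mean from the baseline mean, in baseline standard deviations."""
    sd = stdev(window.baseline)  # needs at least two baseline samples
    if sd == 0:
        return 0.0  # a perfectly flat baseline gives no scale to judge drift against
    return (mean(window.recent) - mean(window.baseline)) / sd


def concern_score(signals: Dict[str, SignalWindow], z_limit: float = 2.0) -> float:
    """Graded 0..1 score: each signal's |z| is scaled by z_limit, capped at 1, then averaged.

    z_limit is a hypothetical tuning value, not a validated clinical threshold.
    """
    if not signals:
        return 0.0
    parts = [min(abs(deviation_z(w)) / z_limit, 1.0) for w in signals.values()]
    return sum(parts) / len(parts)


score = concern_score({
    "heart_rate": SignalWindow(baseline=[62, 64, 63, 65, 61], recent=[70, 72, 71]),
    "respiration": SignalWindow(baseline=[14, 15, 14, 15, 14], recent=[14, 15, 14]),
})
print(f"concern score: {score:.2f}")
```

Averaging capped deviations means one noisy signal can move the score only part of the way, while concurrent drift across several signals pushes it higher, which is the behavior a single-signal threshold cannot capture.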

Comparison of cabin-safety approaches

| Approach | What it sees | Gaps or limitations | Best use in autonomous vehicle safety |
|---|---|---|---|
| Eye and head tracking | Gaze direction, distraction, eyelid closure, head pose | Internal physiological stress or silent medical events | Baseline DMS and takeover-readiness monitoring |
| Steering and vehicle behavior signals | Lane variation, steering corrections, pedal behavior | Early fatigue, subtle impairment, passenger/driver condition | Supplemental indirect monitoring |
| Contact wearables or seat sensors | Heart rate and some biosignals | Low adoption, user friction, inconsistent use | Controlled fleets or pilots |
| Contactless vital-sign sensing | Heart rate, respiration, HRV trends without wearables | Depends on sensor placement, motion handling, validation | Strong complement to camera-based in-cabin monitoring |
| Multi-sensor fusion | Behavioral plus physiological context | Higher integration and validation burden | Most robust path for future smart cabins |

Contactless sensing is making physiological monitoring practical in the cabin

A few years ago, cabin vital signs sounded unrealistic outside research labs. That is changing.

Fraunhofer IIS said in 2024 that it had developed a contactless in-cabin system using a radar sensor integrated into the headliner to measure heart rate, breathing rate, and heart-rate variability. The point was not just comfort. Fraunhofer explicitly framed it as a road-safety technology that could detect stress or fatigue and remain robust to clothing and typical movement.
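Neither Fraunhofer nor this article describes the exact signal processing, but a common generic approach for radar or camera-based vital signs is to recover a tiny periodic chest-motion or skin-intensity signal and find the dominant frequency in a physiologically plausible band. The sketch below assumes a clean, uniformly sampled displacement signal and uses typical bands of roughly 0.1 to 0.5 Hz for breathing and 0.8 to 3.0 Hz for heartbeat; those bands and the function names are assumptions. It scans the discrete-time Fourier transform on a fine grid to stay dependency-free, where production code would normally use an FFT library.

```python
import math
from typing import Sequence, Tuple


def band_peak_hz(signal: Sequence[float], fs: float, band: Tuple[float, float],
                 step_hz: float = 0.01) -> float:
    """Frequency (Hz) with the most spectral power inside `band`.

    Evaluates the discrete-time Fourier transform on a grid of candidate
    frequencies: O(len(signal) * grid size), acceptable for a sketch.
    """
    if not signal:
        raise ValueError("signal is empty")
    offset = sum(signal) / len(signal)
    x = [s - offset for s in signal]  # remove the DC component
    lo, hi = band
    best_f, best_power = lo, -1.0
    for k in range(int(round((hi - lo) / step_hz)) + 1):
        f = lo + k * step_hz
        w = 2 * math.pi * f / fs  # radians per sample
        re = sum(v * math.cos(w * i) for i, v in enumerate(x))
        im = sum(v * math.sin(w * i) for i, v in enumerate(x))
        power = re * re + im * im
        if power > best_power:
            best_f, best_power = f, power
    return best_f


def vitals_per_minute(chest_signal: Sequence[float], fs: float) -> Tuple[float, float]:
    """Return (breaths per minute, beats per minute) from one displacement signal."""
    breathing_hz = band_peak_hz(chest_signal, fs, (0.1, 0.5))  # about 6 to 30 breaths/min
    heartbeat_hz = band_peak_hz(chest_signal, fs, (0.8, 3.0))  # about 48 to 180 beats/min
    return breathing_hz * 60, heartbeat_hz * 60


# Synthetic 60 s signal at 20 Hz: strong 15/min breathing plus a weak 72/min heartbeat.
fs = 20.0
signal = [math.sin(2 * math.pi * 0.25 * i / fs) + 0.1 * math.sin(2 * math.pi * 1.2 * i / fs)
          for i in range(int(60 * fs))]
breaths, beats = vitals_per_minute(signal, fs)
print(f"{breaths:.1f} breaths/min, {beats:.1f} beats/min")
```

Real cabin signals also contain vehicle vibration, body movement, and breathing harmonics that can land in the heart-rate band, so practical systems add filtering, motion compensation, and quality checks before trusting a peak.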

Another useful example comes from Kaiwen Guo, Tianqu Zhai, Manoj H. Purushothama, Alexander Dobre, Shawn Meah, Elton Pashollari, Aabhaas Vaish, Carl DeWilde, and Mohammed N. Islam. Their Applied Sciences paper on contactless in-vehicle monitoring with a near-infrared time-of-flight camera reported highway heart-rate success rates of 82% for passengers and 71.9% for drivers, with respiration measurements showing a mean deviation of -1.4 breaths per minute. Those figures are research results, not a production guarantee, but they show that road-usable contactless monitoring is no longer theoretical.
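Figures like these are easier to interpret once the metrics are written out. The paper's exact definitions are not restated here, so the sketch below uses a common reading as an assumption: mean deviation as the average signed error (estimate minus reference), where a negative value such as -1.4 breaths per minute means the system read low on average, and success rate as the share of measurement windows whose error falls within a tolerance. The tolerance, the sample readings, and the function names are hypothetical.

```python
from typing import Sequence


def _check(estimates: Sequence[float], reference: Sequence[float]) -> None:
    if len(estimates) != len(reference) or len(estimates) == 0:
        raise ValueError("need equal-length, non-empty sequences")


def mean_signed_deviation(estimates: Sequence[float], reference: Sequence[float]) -> float:
    """Average of (estimate - reference); a negative result means readings run low."""
    _check(estimates, reference)
    return sum(e - r for e, r in zip(estimates, reference)) / len(estimates)


def success_rate(estimates: Sequence[float], reference: Sequence[float],
                 tolerance: float) -> float:
    """Share of windows whose absolute error is within `tolerance` (an assumed criterion)."""
    _check(estimates, reference)
    hits = sum(1 for e, r in zip(estimates, reference) if abs(e - r) <= tolerance)
    return hits / len(estimates)


# Made-up respiration readings (breaths/min) against a made-up reference, for illustration.
est = [14, 12, 15, 13]
ref = [15, 14, 15, 15]
print(mean_signed_deviation(est, ref), success_rate(est, ref, tolerance=1.0))
```

Because positive and negative errors cancel, a small mean deviation can coexist with large individual errors, which is why such results are usually read alongside an absolute-error measure or limits of agreement.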

The takeaway is simple: the cabin is becoming measurable.

Where vital signs fit in autonomous safety workflows

Autonomous vehicle teams usually talk about handoff, fallback, and fail-operational design. Vital signs fit into all three.

1. Handoff readiness

Eriksson and Stanton's work showed how wide takeover times can be when drivers are pulled back from automated control. That alone should make engineers skeptical of crude readiness assumptions. If a vehicle knows the driver has stable gaze but also rising respiratory irregularity, reduced HRV, or signs of fatigue, it can make a more conservative handoff decision.
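A hypothetical policy shows how that extra context could change a handoff decision. Everything here is an assumption for illustration: the `DriverState` fields, the idea of counting concerns, and the three actions are not drawn from any standard or production system. The point is structural: stable gaze with no physiological flags gets a normal takeover request, while each additional concern moves the vehicle toward a longer warning or toward not handing back control at all.

```python
from dataclasses import dataclass
from enum import Enum


class HandoffAction(Enum):
    STANDARD_REQUEST = "standard takeover request"
    EXTENDED_WARNING = "longer lead time and escalating alerts"
    MINIMAL_RISK_MANEUVER = "keep control and move to a minimal-risk condition"


@dataclass
class DriverState:
    eyes_open: bool
    gaze_on_road: bool
    respiration_irregular: bool
    hrv_below_baseline: bool
    fatigue_flag: bool


def choose_handoff(state: DriverState) -> HandoffAction:
    """Hypothetical policy: every extra concern makes the handoff more conservative."""
    if not state.eyes_open:
        return HandoffAction.MINIMAL_RISK_MANEUVER
    # Booleans sum as 0/1, so this counts how many concerns are present.
    concerns = sum([
        not state.gaze_on_road,
        state.respiration_irregular,
        state.hrv_below_baseline,
        state.fatigue_flag,
    ])
    if concerns == 0:
        return HandoffAction.STANDARD_REQUEST
    if concerns == 1:
        return HandoffAction.EXTENDED_WARNING
    return HandoffAction.MINIMAL_RISK_MANEUVER


# Stable gaze, but breathing has become irregular: not a plain "ready".
print(choose_handoff(DriverState(True, True, True, False, False)))
```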

2. Drowsiness and cognitive overload

PERCLOS, introduced by Wierwille and Ellsworth at Virginia Tech in 1994, remains foundational because eyelid behavior is one of the clearest visible signs of drowsiness. But drowsiness is not purely visual. Physiological cues can strengthen confidence in the alert, especially during long-haul, monotonous, or nighttime driving.
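PERCLOS is usually computed as P80: the proportion of time, within a rolling window, that the eyelids cover at least 80% of the pupil. The sketch below assumes the camera pipeline already provides a per-frame eyelid-closure fraction between 0 (fully open) and 1 (fully closed). The one-minute default window is a common choice but an assumption here; window lengths and alert levels vary between implementations, and `PerclosTracker` is a hypothetical name.

```python
from collections import deque
from typing import Deque, Optional


class PerclosTracker:
    """Rolling PERCLOS (P80): share of recent frames with eyelids at least 80% closed."""

    def __init__(self, fps: float, window_s: float = 60.0, closed_threshold: float = 0.8):
        size = int(fps * window_s)
        if size < 1:
            raise ValueError("window must contain at least one frame")
        self.closed_threshold = closed_threshold
        # With maxlen set, the oldest frame drops off automatically on each append.
        self.frames: Deque[bool] = deque(maxlen=size)

    def update(self, closure_fraction: float) -> Optional[float]:
        """Add one frame (0 = fully open, 1 = fully closed).

        Returns PERCLOS as a fraction once the window is full, otherwise None.
        """
        self.frames.append(closure_fraction >= self.closed_threshold)
        if len(self.frames) < self.frames.maxlen:
            return None
        return sum(self.frames) / len(self.frames)


# 30 fps camera with the default one-minute window: 1,800 frames before the first value.
tracker = PerclosTracker(fps=30)
```

A physiological trend, such as a shift in respiration or HRV, could then raise or lower confidence in a PERCLOS-based drowsiness alert, along the lines the paragraph above describes.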

3. Medical-event response

This is the part many teams still underplay. An autonomous or partially automated vehicle may one day be the first system to notice a driver is in real medical trouble. A sudden drop in responsiveness paired with abnormal heart-rate or breathing patterns is a different safety case than ordinary distraction. In those moments, the vehicle may need to shift into a minimal-risk maneuver, alert a fleet operator, or support emergency response workflows.
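One way to encode that distinction is sketched below. It is hypothetical throughout: the observation fields, the plausibility ranges, and the action names are illustrative placeholders, not clinical limits or a certified safety concept. What it shows is the branching the paragraph describes: a responsive driver who looks away gets a distraction warning, while an unresponsive driver with abnormal vitals triggers the minimal-risk maneuver, a fleet-operator alert, and the emergency workflow together.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical plausibility ranges for illustration only; not clinical limits.
HR_RANGE_BPM: Tuple[float, float] = (40.0, 150.0)
RESP_RANGE_BPM: Tuple[float, float] = (6.0, 30.0)


@dataclass
class CabinObservation:
    responds_to_alerts: bool
    gaze_on_road: bool
    heart_rate_bpm: float
    respiration_bpm: float


def vitals_abnormal(obs: CabinObservation) -> bool:
    hr_ok = HR_RANGE_BPM[0] <= obs.heart_rate_bpm <= HR_RANGE_BPM[1]
    resp_ok = RESP_RANGE_BPM[0] <= obs.respiration_bpm <= RESP_RANGE_BPM[1]
    return not (hr_ok and resp_ok)


def plan_response(obs: CabinObservation) -> List[str]:
    """Map one observation to hypothetical vehicle actions."""
    if obs.responds_to_alerts:
        # Ordinary distraction case: the driver is reachable.
        return [] if obs.gaze_on_road else ["distraction_warning"]
    if vitals_abnormal(obs):
        # Unresponsive with abnormal vitals: treat as a possible medical event.
        return ["minimal_risk_maneuver", "alert_fleet_operator", "start_emergency_workflow"]
    # Unresponsive with in-range vitals (sleep, impairment): still not fit to drive.
    return ["escalating_alerts", "minimal_risk_maneuver"]
```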

Industry applications

Passenger vehicle OEMs

Passenger-car programs are under pressure to combine safety ratings, premium-cabin features, and future automation roadmaps. For them, vital-sign sensing can support drowsiness detection, takeover-readiness logic, and differentiated in-cabin intelligence without requiring the driver to wear anything.

Robotaxi and autonomous shuttle programs

These programs care about edge cases. A remote operator or support team needs better information than "occupant not responding." Physiological context could help distinguish a sleeping rider, an intoxicated rider, and a rider in distress.

Commercial fleets

Fleet safety teams already think in terms of fatigue, duty cycles, and incident prevention. Vital-sign monitoring adds a layer of driver-condition data that may matter even more in long-haul trucking, mining, and heavy equipment than in consumer vehicles.

Tier-1 suppliers and cockpit-platform teams

For suppliers, the biggest issue is architecture. Can the same in-cabin hardware support distraction monitoring today and richer health-state inference tomorrow? That question is driving interest in sensor fusion, edge processing, and flexible software stacks.
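In software terms, that question often comes down to whether estimators share one sensor pipeline. The sketch below is a hypothetical architecture, not any supplier's API: each estimator consumes the same frame stream through a small `Estimator` protocol, so a vital-sign module can be registered later without touching the distraction path. A real vital-sign estimator would buffer many frames before producing an output; the placeholder here simply passes a value through.

```python
from typing import Dict, List, Protocol


class Estimator(Protocol):
    name: str

    def process(self, frame: dict) -> dict: ...


class CabinPipeline:
    """Fans each sensor frame out to every registered estimator."""

    def __init__(self) -> None:
        self._estimators: List[Estimator] = []

    def register(self, estimator: Estimator) -> None:
        self._estimators.append(estimator)

    def run(self, frame: dict) -> Dict[str, dict]:
        return {e.name: e.process(frame) for e in self._estimators}


class DistractionEstimator:
    name = "distraction"

    def process(self, frame: dict) -> dict:
        return {"gaze_on_road": frame.get("gaze_on_road", False)}


class VitalSignEstimator:
    name = "vitals"

    def process(self, frame: dict) -> dict:
        # Placeholder: a real estimator would analyze a buffered signal, not one frame.
        return {"heart_rate_bpm": frame.get("hr_estimate")}


pipeline = CabinPipeline()
pipeline.register(DistractionEstimator())  # shipped today
pipeline.register(VitalSignEstimator())    # added later on the same hardware
print(pipeline.run({"gaze_on_road": True, "hr_estimate": 68.0}))
```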

Current research and evidence

The evidence base is still developing, but a few studies and policy documents explain why this topic keeps gaining momentum.

- Wierwille and Ellsworth's Virginia Tech work on PERCLOS helped establish eyelid closure as a practical fatigue indicator for camera-based monitoring.

- Eriksson and Stanton at the University of Southampton showed in 2017 that takeover response times in automated-driving transitions varied from 1.9 to 25.7 seconds, with secondary-task engagement slowing response.

- NHTSA's 2024 impaired-driving report makes clear that passive driver-state detection is becoming a federal safety priority, even though the agency is not prescribing one cabin architecture.

- Fraunhofer IIS demonstrated in 2024 that radar-based contactless monitoring can capture heart rate, breathing rate, and HRV from the vehicle cabin.

- Guo and colleagues showed that near-infrared time-of-flight imaging can estimate heart rate and respiration in moving vehicles, addressing in-motion measurement, one of the technical barriers that long kept this field in the lab.

Selected evidence at a glance

| Source | Institution or venue | Key point for autonomous safety |
|---|---|---|
| Wierwille and Ellsworth (1994) | Virginia Tech | Eyelid closure metrics like PERCLOS remain core to drowsiness monitoring |
| Eriksson and Stanton (2017) | University of Southampton | Takeover times vary widely, so fallback readiness needs richer context |
| NHTSA Report to Congress (2024) | U.S. DOT / NHTSA | Passive impairment detection is moving up the federal safety agenda |
| Fraunhofer IIS (2024) | Fraunhofer IIS | Radar-based contactless sensing can monitor heart rate, breathing, and HRV in-cabin |
| Guo et al. | Applied Sciences | ToF-based systems can estimate heart rate and respiration in highway driving scenarios |

The future of autonomous-vehicle safety will be more physiological

The next phase of in-cabin safety probably will not look like one sensor replacing another. It will look like fusion.

A mature autonomous-vehicle cabin will likely combine gaze tracking, head pose, context from the driving stack, and passive vital-sign estimation. That mix is more useful than a single distraction warning because it can answer a harder question: not just whether the driver looked away, but whether the human is genuinely able to resume control.

There is also a business reason this matters. As automated features expand, every awkward transition, false alert, or missed medical event becomes a trust problem. Better human-state monitoring is how vehicle makers can reduce those failures and the loss of trust that follows them.

If you want more context on adjacent topics, see our analysis of how drowsiness detection systems read vital signs and our breakdown of what automotive rPPG looks like inside the cabin. For teams planning around regulation, our overview of driver monitoring system regulations for 2026 is a useful companion.

Frequently Asked Questions

Why are vital signs important if autonomous vehicles already use driver-facing cameras?

Driver-facing cameras are excellent for gaze, head pose, and eyelid monitoring, but they do not capture the full physiological state of the person in the seat. Vital signs add context about stress, fatigue, respiratory change, and possible medical distress.

Which vital signs are most relevant for autonomous vehicle safety?

Heart rate, respiration rate, and heart-rate variability are the most commonly discussed because they can reflect workload, drowsiness, stress, and some acute health issues. In practice, their value is highest when combined with camera-based behavioral signals.

Are cabin vital-sign systems limited to fully autonomous vehicles?

No. They may be useful even earlier in Level 2 and Level 2+ systems, where the vehicle still depends on a human fallback driver. In those programs, understanding driver readiness may be even more important than in a true driverless deployment.

Will contactless vital-sign sensing replace traditional driver monitoring systems?

Probably not. The more realistic path is fusion. Camera-based DMS remains the foundation for distraction and drowsiness monitoring, while contactless vital-sign sensing adds physiological context that improves decision-making.

The cabin is becoming a health-and-safety sensing environment, not just a place where software watches the road. Circadify is building contactless sensing capabilities for automotive and cabin-monitoring programs, including custom approaches for teams exploring the next generation of in-cabin safety workflows. Learn more at circadify.com/custom-builds/automotive-cabin.